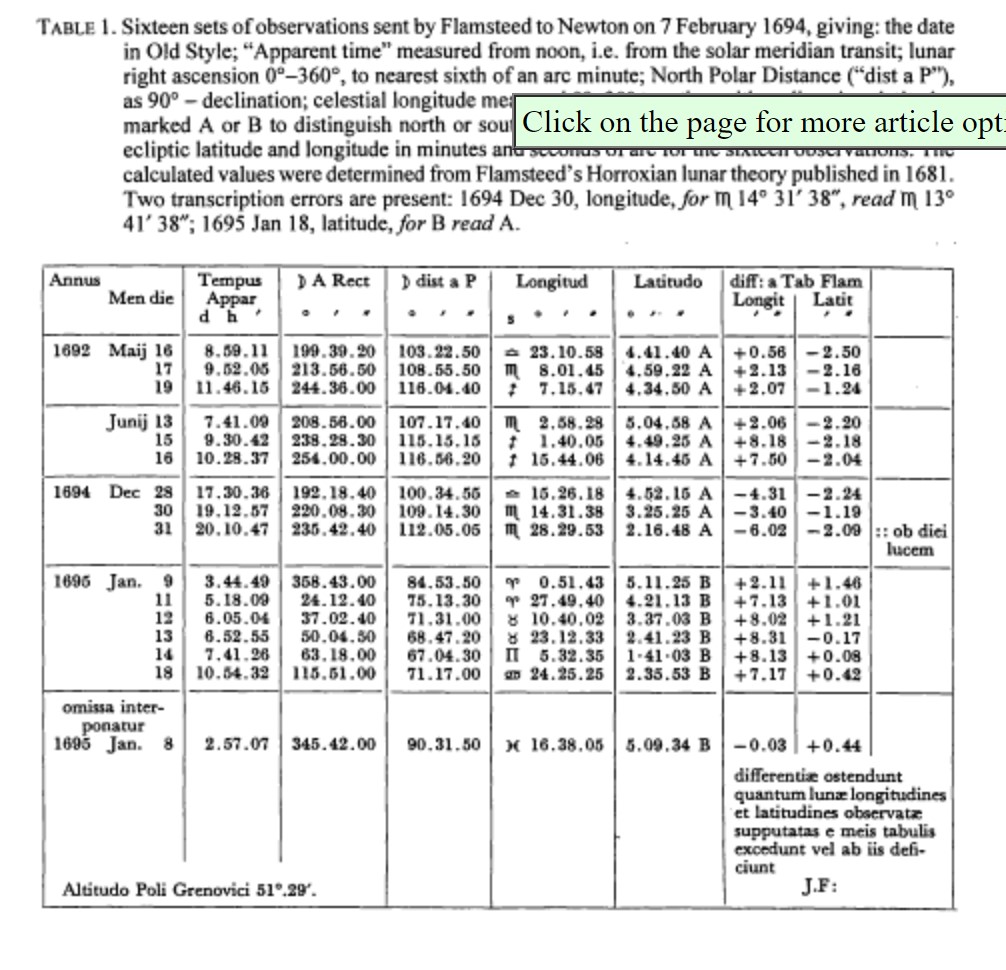

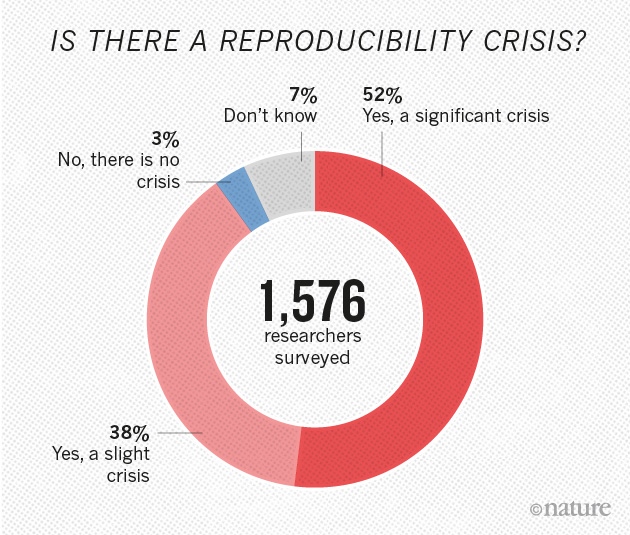

name: title
class: left, middle
<!--background-image: url(images/rawpixel/nasa-jupiter.jpg) background-size: cover-->

<style>.shareagain-bar { --shareagain-foreground: rgb(255, 255, 255); --shareagain-background: rgba(0, 0, 0, 0.5); --shareagain-facebook: none; --shareagain-pinterest: none; --shareagain-reddit: none; }</style>

# Reproducibility: historical notes & concept

### [Act I] Setting the stage

.large[Reproducible Research Practices (RRP'23) · April 2023]

.right[Carlos Granell · Sergi Trilles]

.right[Universitat Jaume I]

???

This enhanced color view of Jupiter's south pole was created using data from the JunoCam instrument on NASA's Juno spacecraft. Original from NASA. Source: [Rawpixel](https://www.rawpixel.com/image/440596/jupiters-south-pole)

---
class: inverse, bottom, middle

## Focus is the art of knowing what to ignore.

.large[The fastest way to raise your level of performance: Cut your number of commitments in half.]

---
class: inverse, center, middle

# Reproducibility in Science

---
class: left

# Should we trust science?

.huge[Doubt is inherently human and we (scientists) also have doubts!]

--

.huge[Karl Popper's *Conjectures and Refutations*]

> .large["At the core of the scientific method is the attempt to refute or disprove theories"]

???

By default, we (scientists) doubt everything.

[Karl Popper](https://en.wikipedia.org/wiki/Karl_Popper)

---
class: left

# What is the scientific method?

.huge[Science progresses through *conjectures and refutations*]

--

- .large[Scientists are confronted with some question, and offer a possible answer]

--

- .large[The answer is a .gray.bg-blue[conjecture] initially (is it right or wrong?)]

--

- .large[Scientists do their best to .gray.bg-blue[refute] this conjecture, or prove it wrong]

--

- .large[Typically it is refuted, rejected, and replaced by a better one]

--

- .large[This too will then be tested, and eventually replaced by an even better one]

--

- .large[If scientists have not been able to refute a theory over a long period of time, despite their best efforts, then the theory has been .gray.bg-blue[corroborated]]

???

Source: [Why should we trust science? Because it doesn’t trust itself](https://theconversation.com/why-should-we-trust-science-because-it-doesnt-trust-itself-188988)

---
class: left

# Advancing science and knowledge...

--

.huge[... is all about the **scientific method**!]

- .large[For Popper, the ideas we can most trust are those that have been the most .blue[tried and tested]]

--

.huge[... and **dissemination** of scientific results]

???

[Karl Popper](https://en.wikipedia.org/wiki/Karl_Popper)

---
class: center, middle

# If **science drivers** are/should be

## .gray.bg-blue[Openness], .gray.bg-blue[Transparency], .gray.bg-blue[Honesty], .gray.bg-blue[Integrity], .gray.bg-blue[Reproduction] (test), and .gray.bg-blue[Replication] (cumulative evidence)

---
class: center, middle

# Why are .gray.bg-blue[reproduction & replication] NOT integral parts of the scientific method and of scientific publications?

---
class: inverse, center, middle

# Is reproducibility a new problem?

---
class: center, middle

## Let's go back to the .gray.bg-blue[17th century...]

---
class: center

# Huygens vs Boyle

.pull-left[
<img src="https://upload.wikimedia.org/wikipedia/commons/a/a4/Christiaan_Huygens-painting.jpeg" width="60%"/>

[Christiaan Huygens](https://en.wikipedia.org/wiki/Christiaan_Huygens)
]

.pull-right[
<img src="https://upload.wikimedia.org/wikipedia/commons/thumb/a/a3/The_Shannon_Portrait_of_the_Hon_Robert_Boyle.jpg/800px-The_Shannon_Portrait_of_the_Hon_Robert_Boyle.jpg" width="60%"/>

[Robert Boyle](https://en.wikipedia.org/wiki/Robert_Boyle)
]

---
class: center, middle

.pull-left[
<br/><br/><br/><br/>
.huge[Boyle's [air-pump](https://en.wikipedia.org/wiki/Air_pump) was at the center of one of the first documented disputes over reproducibility in experimental science...]
]

.pull-right[
<img src="https://upload.wikimedia.org/wikipedia/commons/3/31/Boyle_air_pump.jpg" width="65%"/>
]

---
class: center

# Huygens vs Boyle

.pull-left[
<img src="https://upload.wikimedia.org/wikipedia/commons/a/a4/Christiaan_Huygens-painting.jpeg" width="35%"/>

.large[**Huygens** observed a new effect (*[anomalous suspension](https://www.youtube.com/watch?v=vekG7rotwy4)*) in the Netherlands]
]

--

.pull-right[
<img src="https://upload.wikimedia.org/wikipedia/commons/thumb/a/a3/The_Shannon_Portrait_of_the_Hon_Robert_Boyle.jpg/800px-The_Shannon_Portrait_of_the_Hon_Robert_Boyle.jpg" width="35%"/>

.large[**Boyle** could not replicate this effect in his own air pump in the UK]
]

--

.huge[Huygens went to the UK (1663) to personally help Boyle .gray.bg-blue[replicate] the anomalous suspension of water]

???

[Source](https://en.wikipedia.org/wiki/Reproducibility)

Note here that non-replication can be good! Here, a new, valid effect during replication was not observed in the original study. That's how science progresses!

---
class: center

# Newton vs Flamsteed

.pull-left[
<img src="https://upload.wikimedia.org/wikipedia/commons/thumb/3/3b/Portrait_of_Sir_Isaac_Newton%2C_1689.jpg/1280px-Portrait_of_Sir_Isaac_Newton%2C_1689.jpg" width="60%"/>

[Isaac Newton](https://en.wikipedia.org/wiki/Isaac_Newton)
]

.pull-right[
<img src="https://upload.wikimedia.org/wikipedia/commons/1/16/John_Flamsteed_1702.jpg" width="60%"/>

[John Flamsteed](https://en.wikipedia.org/wiki/John_Flamsteed)
]

---
class: center, middle

.pull-left[
<br/><br/><br/><br/>
.huge[Flamsteed's [lunar data](https://articles.adsabs.harvard.edu//full/1995JHA....26..237K/0000237.000.html) & Newton's request for raw data...]
]

.pull-right[ ]

???

[SAO/NASA Astrophysics Data System (ADS)](https://articles.adsabs.harvard.edu//full/1995JHA....26..237K/0000237.000.html)

---
name: newton
class: center

# Newton vs Flamsteed

.large[In 1695, Sir Isaac Newton wrote a letter to the British Astronomer Royal John Flamsteed, whose data on lunar positions he had been trying to obtain for more than half a year...]

--

.large[Newton wrote that...]

> .large[“these and all your communications will be useless to me unless you can propose some practicable way or other of supplying me with observations … .gray.bg-blue[I want not your calculations, but your observations only].”] .small[<a name=cite-noy2019></a>[[NN19](http://doi.org/10.1038/s41563-019-0539-5)]]

???

[Kollerstrom, N. & Yallop, B. D. J. Hist. Astron. 26, 237–246 (1995)](https://doi.org/10.1177%2F002182869502600303).

---
class: inverse, center, middle

# Is reproducibility a new problem?

---
class: center, middle

## Let's go back to the .gray.bg-blue[19th century]

---
class: left

# The case of Armand Fizeau

.pull-left[
.center[
<img src="https://upload.wikimedia.org/wikipedia/commons/thumb/7/73/Armand_Hippolyte_Louis_Fizeau_by_Eug%C3%A8ne_Pirou_-_Original.jpg/480px-Armand_Hippolyte_Louis_Fizeau_by_Eug%C3%A8ne_Pirou_-_Original.jpg" width="40%"/>

[Armand Fizeau](https://en.wikipedia.org/wiki/Hippolyte_Fizeau)]
]

.pull-right[
- .large[Experimental physicist in Paris]

- .large[His speciality was refining and confirming other people's results]

- .large[This is the soul of science because there is no such thing as a fact that cannot be independently corroborated]
]

.center.huge[[How simple ideas lead to scientific discoveries](https://www.youtube.com/watch?v=F8UFGu2M2gM)]

---
class: inverse, center, middle

# Is reproducibility a new problem?

---
class: center, middle

## Let's go back to .gray.bg-blue[40 years ago], with the birth of personal computers...

---
class: left

# Literate programming .small[<a name=cite-knuth1984></a>[[Knu84](https://doi.org/10.1093/comjnl/27.2.97)]]

.pull-left[
.huge[[Prose & code together](http://www.literateprogramming.com/)]

- .large[Code embedded within the program's documentation as opposed to documentation embedded within code.]

- .large[Combines .gray.bg-blue[programming language] (R, Python) with .gray.bg-blue[documentation language] (TeX, LaTeX, Markdown).]
]

.pull-right[
<img src="https://upload.wikimedia.org/wikipedia/commons/4/4f/KnuthAtOpenContentAlliance.jpg" width="60%" style="display: block; margin: auto;" />

.center[[Donald E Knuth](https://en.wikipedia.org/wiki/Donald_Knuth)]
]

---

# Dynamic documentation (Act III)

.large[.gray.bg-blue[constant change]: anytime that the underlying data, analysis, or code change, the report itself is automatically updated]

--

.pull-left[
.large[Sweave (2002) by Friedrich Leisch allowed R code to be embedded within LaTeX documents]
]

--

.pull-right[
.large[Language-specific tools to enable interactive or “live” notebooks]:

- [R Markdown](https://rmarkdown.rstudio.com/)
- [Jupyter Notebooks](https://jupyter.org/)
- [Wolfram Notebooks](https://www.wolfram.com/notebooks/)
- [Google Colab](https://colab.research.google.com/)
- [Quarto](https://quarto.org/): Python, R, Julia and Observable
]

---
class: inverse, center, middle

# Is reproducibility a new problem?

---
class: center, middle

## Let's go back to .gray.bg-blue[30 years ago], with the birth of the Web...

---
class: center, middle

> .huge[Today, few published results are reproducible in any practical sense. To verify them requires almost as much effort as it took to create them originally. After a time, authors are often unable to reproduce their own results! For these reasons, many people ignore most of the literature.]

.right[Jon Claerbout, *Earth Soundings Analysis*]

???

Jon Claerbout revised his book *Earth Soundings Analysis* with a valid complaint.

---
class: left

### .small[<a name=cite-claerbout1992></a>[[CK92](https://doi.org/10.1190/1.1822162)]] - _Electronic documents give reproducible research a new meaning_

.pull-left[
- .large[Merge publication with underlying computational analysis]

- .large[Executable digital notebook]

- .large[Be open & help others]

- .large[Document for future self]
]

.pull-right[
<img src="images/Claerbout92.png" width="60%" style="display: block; margin: auto;" />
]

---
class: inverse, center, middle

# Is reproducibility a new problem?

---
class: center, middle

## Let's go back to .gray.bg-blue[Sep 15, 2022]

---
class: left

### Facts

.large[Rubén Herzog, Universidad de Valparaíso in Chile, published a 2020 [article](https://www.nature.com/articles/s41598-020-74060-6) in Scientific Reports]

--

.large[Paul Lodder, a grad student at the University of Amsterdam, wanted to expand on the model used by Rubén]

--

.large[Paul reproduced the paper's analysis results before looking into expanding...and got .gray.bg-blue[different results]]

> I (Paul) shared my findings with Rubén by writing up a .gray.bg-blue[literate programming report], which allowed me to present my findings in a reproducible manner by including the code that leads to any of the results discussed.

> Because I (Paul) was able to run his code and mine on the same simulated data, it was very clear that the typo caused the results from the paper

???

Full story on [Retraction Watch](https://retractionwatch.com/):

- [A grad student finds a ‘typo’ in a psychedelic study’s script that leads to a retraction](https://retractionwatch.com/2022/10/06/a-grad-student-finds-a-typo-in-a-psychedelic-studys-script-that-leads-to-a-retraction/#more-125759)
- [‘A display of extreme academic integrity’: A grad student who found a key error praises the original author](https://retractionwatch.com/2022/10/11/a-display-of-extreme-academic-integrity-a-grad-student-who-found-a-key-error-praises-the-original-author/)

---
class: left

### Lessons

.huge[.gray.bg-blue[Academic integrity & honesty] through <br/> .gray.bg-blue[transparent and reproducible computational code]]

- .large[Both collaborated to track down the typo]

- .large[Paper is now retracted, but both are working on a new version of the paper]

- .large[Science wins!]

???

Full story on [Retraction Watch](https://retractionwatch.com/):

- [A grad student finds a ‘typo’ in a psychedelic study’s script that leads to a retraction](https://retractionwatch.com/2022/10/06/a-grad-student-finds-a-typo-in-a-psychedelic-studys-script-that-leads-to-a-retraction/#more-125759)
- [‘A display of extreme academic integrity’: A grad student who found a key error praises the original author](https://retractionwatch.com/2022/10/11/a-display-of-extreme-academic-integrity-a-grad-student-who-found-a-key-error-praises-the-original-author/)

---
class: inverse, center, middle

# Is reproducibility a new problem?

---
class: center, middle

## No, it is not .gray.bg-blue[NEW], but it .gray.bg-blue[IS still a problem].

---
class: left, bottom
background-image: url(images/supertramp.jpg)
background-size: contain

---
class: left

### .small[<a name=cite-alsheikhAli2011></a>[[Als+11](https://doi.org/10.1371/journal.pone.0024357)]] - _Public Availability of Published Research Data in High-Impact Journals_

.huge[Assessed 500 papers]

- .large[149 were not subject to any data availability policy]

- .large[208 did not adhere to data availability instructions]

- .large[143 adhered to minimum requirements]

- .large[47 deposited full primary data (~9%)]

---
class: left

### .small[<a name=cite-baker2015></a>[[Bak15](https://doi.org/10.1038/nature.2015.17433)]] - _First results from psychology's largest reproducibility test_

.pull-left[
- .large[Only 39 out of 100 of the published studies in psychology could be reproduced]
]

.pull-right[
<img src="images/replicatation-graphic-b.png" width="65%" />
]

---
class: left

### Reproducibility issues are well covered...

- .large[in __science studies__ in general, across various disciplines] .small[<a name=cite-ioannidis2005></a>[[Ioa05](https://doi.org/10.1371/journal.pmed.0020124)]]: _Why most published research findings are false_

- .large[in __economics__] .small[<a name=cite-ioannidis2017></a>[[ISD17](https://doi.org/10.1111/ecoj.12461)]]: _The Power of Bias in Economics Research_

- .large[in __medical chemistry__] .small[<a name=cite-baker2017></a>[[Bak17](https://doi.org/10.1038/548485a)]]: _Check your chemistry_

- .large[in __neuroscience__] .small[<a name=cite-button2013></a>[[But+13](https://doi.org/10.1038/nrn3475)]]: _Power failure: why small sample size undermines the reliability of neuroscience_

---
class: left

### .small[<a name=cite-baker2016></a>[[Bak16](http://doi.org/10.1038/533452a)]] - _1,500 scientists lift the lid on reproducibility_

.pull-left[
- .large[+70% of researchers have tried and failed to reproduce another scientist's experiments]

- .large[+50% have failed to reproduce their own experiments]
]

.pull-right[ ]

---
class: left

### .small[[[Bak16](http://doi.org/10.1038/533452a)]] - Limitations & enablers

.pull-left[
<img src="images/reproducibility-graphic-online4.jpg" width="65%" style="display: block; margin: auto;" />
]

.pull-right[
<img src="images/reproducibility-graphic-online5.jpg" width="70%" style="display: block; margin: auto;" />
]

---
class: left

### .small[<a name=cite-fanelli2018></a>[[Fan18](https://doi.org/10.1073/pnas.1708272114)]] - _Opinion: Is science really facing a reproducibility crisis, and do we need it to?_

.huge[Is the “science is in crisis” narrative wrong?]

--

> .large[The new “science is in crisis” narrative is not only empirically unsupported, but also quite obviously counterproductive. Instead of inspiring younger generations to do more and better science, it might foster in them cynicism and indifference. Instead of inviting greater respect for and investment in research, it risks discrediting the value of evidence and feeding antiscientific agendas.]

---
class: left

### .small[[[Fan18](https://doi.org/10.1073/pnas.1708272114)]] - _Opinion: Is science really facing a reproducibility crisis, and do we need it to?_

.huge[The “science is in crisis” narrative is wrong?]

> .large[Therefore, contemporary science could be more accurately portrayed as facing “new opportunities and challenges” or even a “revolution”. .gray.bg-blue[Efforts to promote transparency and reproducibility would find complete justification in such a narrative of transformation and empowerment], a narrative that is not only more compelling and inspiring than that of a crisis, but also better supported by evidence.]

---
class: inverse, center, middle

# The concept of reproduction

---
class: left

# .center[Today's reality]

- .huge[.gray.bg-blue[Computation] has an increasing role in scientific research] .small[<a name=cite-stodden2014></a>[[SM14](https://doi.org/10.5334/jors.ay)]]

- .huge[Many and diverse .gray.bg-blue[computational] sciences (bio-informatics, geophysics, material science, fluid mechanics, climate modelling, computational chemistry, ...)] .small[<a name=cite-barba2021></a>[[Bar21](https://ieeexplore.ieee.org/document/9364769/)]]

- .huge[As results are increasingly produced by complex .gray.bg-blue[computational] processes...]

---
class: center, middle

## ...the traditional .gray.bg-blue[methods] section <br/> of a scientific paper is <br/> .gray.bg-blue[no longer sufficient]

---
class: left

### .small[<a name=cite-nust2021></a>[[NE21](https://f1000research.com/articles/10-253/v1)]] - _The inverse problem_

---
name: stark2018
class: left

### .small[<a name=cite-stark2018></a>[[Sta18](https://doi.org/10.1038/d41586-018-05256-0)]] - _'Show me', not 'trust me'_

.pull-left[
- .large['Show me' = help me if you can]

> "If I say: ‘here’s my work’ and it’s wrong, I might have erred, but at least I am honest".

- .large['Trust me' = catch me if you can]

> "If I publish a paper long on results but short on methods, and it’s wrong, that makes me untrustworthy."
]

.pull-right[
<img src="images/preproducibility.png" width="80%" style="display: block; margin: auto;" />
]

---
background-image: url(images/turingway_reproducibility.jpg)
background-size: contain

???

[The Turing Way Community](https://the-turing-way.netlify.app/reproducible-research/reproducible-research.html)

---
name: matrix_definitions

### .small[<a name=cite-turingway2019></a>[[The19](https://the-turing-way.netlify.app/welcome.html)]] - The Turing Way Community

<img src="images/turingway_reproducible-matrix.jpg" width="85%" style="display: block; margin: auto;" />

???

[The Turing Way Community](https://the-turing-way.netlify.app/reproducible-research/overview/overview-definitions.html)

---

### .small[<a name=cite-donoho2009></a><a name=cite-peng2011></a><a name=cite-leek2017></a><a name=cite-barba2018></a>[[CK92](https://doi.org/10.1190/1.1822162); [Don+09](https://doi.org/10.1109/MCSE.2009.15); [Pen11](https://doi.org/10.1126/science.1213847); [LJ17](https://doi.org/10.1146/annurev-statistics-060116-054104); [Bar18](https://arxiv.org/abs/1802.03311)]] - {Re}* terms

- .large[__Reproducible research__: Authors provide all the necessary data and the computer codes to run the analysis again, re-creating the results.]

- .large[__Reproducibility__: A study is reproducible if all of the code and data used to generate the numbers and figures in the paper are available and exactly produce the published results.]

- .large[__Replication__: A study that arrives at the same scientific findings as another study, collecting new data (possibly with different methods) and completing new analyses.]

---

### .small[[[CK92](https://doi.org/10.1190/1.1822162); [Don+09](https://doi.org/10.1109/MCSE.2009.15); [Pen11](https://doi.org/10.1126/science.1213847); [LJ17](https://doi.org/10.1146/annurev-statistics-060116-054104); [Bar18](https://arxiv.org/abs/1802.03311)]] - {Re}* terms

- .large[__Replicability__: A study is replicable if an identical experiment can be performed like the first study and the statistical results are consistent.]

- .large[__False discovery__: A study is a false discovery if the result presented in the study produces the wrong answer to the question of interest.]

---
class: left

### .small[<a name=cite-ostermann2017></a><a name=cite-nust2018></a><a name=cite-ostermann2021></a>[[OG17](https://doi.org/10.1111/tgis.12195); [Nus+18](https://doi.org/10.7717/peerj.5072); [Ost+21](https://drops.dagstuhl.de/opus/volltexte/2021/14761)]] - Our view

.pull-left[
> .large[A reproducible paper ensures a reader can recreate the computational workflow of a study, including the .gray.bg-blue[prerequisite knowledge] and the .gray.bg-blue[computational environment].]
>
> - The former implies the scientific argument to be understandable and sound.
> - The latter requires a detailed description of the software and data used, with both being openly available.
]

.pull-right[
<img src="images/AGILE00-logo-square.svg" width="70%" style="display: block; margin: auto;" />

.center[[Reproducible AGILE](https://reproducible-agile.github.io/)]
]

---
class: center, middle

## We define .gray.bg-blue[reproducibility] to mean

---
class: center, middle

# .gray.bg-blue[computational] reproducibility

---
class: center

### .small[[[Pen11](https://doi.org/10.1126/science.1213847)]] - _Reproducible Research in Computational Science_

<img src="images/spectrumreproducibility.jpg" width="90%" style="display: block; margin: auto;" />

???

Linked to criteria assessment of reproducibility

---
class: left, middle

# Summary

.huge[Reproducibility involves the .gray.bg-blue[ORIGINAL] data and code]

.huge[Replicability involves .gray.bg-blue[NEW] data and/or methods]

---

# References

.tiny[
<a name=bib-knuth1984></a>[Knuth, DE](#cite-knuth1984) (1984). "Literate Programming". In: _The Computer Journal_ 27.2, pp. 97-111. URL: [https://doi.org/10.1093/comjnl/27.2.97](https://doi.org/10.1093/comjnl/27.2.97).

<a name=bib-claerbout1992></a>[Claerbout, JF and M Karrenbach](#cite-claerbout1992) (1992). "Electronic documents give reproducible research a new meaning". In: _SEG Technical Program Expanded Abstracts 1992_, pp. 601-604.
URL: [https://doi.org/10.1190/1.1822162](https://doi.org/10.1190/1.1822162).

<a name=bib-ioannidis2005></a>[Ioannidis, JP](#cite-ioannidis2005) (2005). "Why most published research findings are false". In: _PLOS Medicine_ 2.8, p. e124. URL: [https://doi.org/10.1371/journal.pmed.0020124](https://doi.org/10.1371/journal.pmed.0020124).

<a name=bib-donoho2009></a>[Donoho, DL, A Maleki, et al.](#cite-donoho2009) (2009). "Reproducible research in computational harmonic analysis". In: _Computing in Science & Engineering_ 11.1, pp. 8-18. URL: [https://doi.org/10.1109/MCSE.2009.15](https://doi.org/10.1109/MCSE.2009.15).

<a name=bib-alsheikhAli2011></a>[Alsheikh-Ali, Alawi A., Waqas Qureshi, et al.](#cite-alsheikhAli2011) (2011). "Public Availability of Published Research Data in High-Impact Journals". In: _PLOS ONE_ 6.9, pp. 1-4. URL: [https://doi.org/10.1371/journal.pone.0024357](https://doi.org/10.1371/journal.pone.0024357).

<a name=bib-button2013></a>[Button, KS, JPA Ioannidis, et al.](#cite-button2013) (2013). "Power failure: why small sample size undermines the reliability of neuroscience". In: _Nature Reviews Neuroscience_ 14.5, pp. 365-376. URL: [https://doi.org/10.1038/nrn3475](https://doi.org/10.1038/nrn3475).

<a name=bib-baker2015></a>[Baker, M](#cite-baker2015) (2015). _First results from psychology's largest reproducibility test_. URL: [https://doi.org/10.1038/nature.2015.17433](https://doi.org/10.1038/nature.2015.17433).

<a name=bib-baker2016></a>[\-\-\-](#cite-baker2016) (2016). "1,500 scientists lift the lid on reproducibility". In: _Nature_ 533.7604, pp. 452-454. URL: [http://doi.org/10.1038/533452a](http://doi.org/10.1038/533452a).

<a name=bib-baker2017></a>[\-\-\-](#cite-baker2017) (2017). "Check your chemistry". In: _Nature_ 548.7668, pp. 485-488. URL: [https://doi.org/10.1038/548485a](https://doi.org/10.1038/548485a).

<a name=bib-ioannidis2017></a>[Ioannidis, JP, TD Stanley, et al.](#cite-ioannidis2017) (2017). "The Power of Bias in Economics Research".
In: _The Economic Journal_ 127.605, pp. F236-F265. URL: [https://doi.org/10.1111/ecoj.12461](https://doi.org/10.1111/ecoj.12461).

<a name=bib-leek2017></a>[Leek, JT and LR Jager](#cite-leek2017) (2017). "Is Most Published Research Really False?" In: _Annual Review of Statistics and Its Application_ 4, pp. 109-122. URL: [https://doi.org/10.1146/annurev-statistics-060116-054104](https://doi.org/10.1146/annurev-statistics-060116-054104).

<a name=bib-barba2018></a>[Barba, L](#cite-barba2018) (2018). _Terminologies for Reproducible Research_. URL: [https://arxiv.org/abs/1802.03311](https://arxiv.org/abs/1802.03311).

<a name=bib-fanelli2018></a>[Fanelli, D](#cite-fanelli2018) (2018). "Opinion: Is science really facing a reproducibility crisis, and do we need it to?" In: _Proceedings of the National Academy of Sciences_ 115.11, pp. 2628-2631. URL: [https://doi.org/10.1073/pnas.1708272114](https://doi.org/10.1073/pnas.1708272114).

<a name=bib-noy2019></a>[Noy, Natasha and Aleksandr Noy](#cite-noy2019) (2019). "Let go of your data". In: _Nature Materials_ 19.1, pp. 128-128. URL: [http://doi.org/10.1038/s41563-019-0539-5](http://doi.org/10.1038/s41563-019-0539-5).

<a name=bib-barba2021></a>[Barba, Lorena A](#cite-barba2021) (2021). "Trustworthy Computational Evidence Through Transparency and Reproducibility". In: _Computing in Science & Engineering_ 23.1, pp. 58-64. URL: [https://ieeexplore.ieee.org/document/9364769/](https://ieeexplore.ieee.org/document/9364769/).
]

---

# References

.tiny[
<a name=bib-peng2011></a>[Peng, RD](#cite-peng2011) (2011). "Reproducible Research in Computational Science". In: _Science_ 334.6060, pp. 1226-1227. URL: [https://doi.org/10.1126/science.1213847](https://doi.org/10.1126/science.1213847).

<a name=bib-stodden2014></a>[Stodden, V and SB Miguez](#cite-stodden2014) (2014). "Best Practices for Computational Science: Software Infrastructure and Environments for Reproducible and Extensible Research".
In: _Journal of Open Research Software_ 2.1, p. e21. URL: [https://doi.org/10.5334/jors.ay](https://doi.org/10.5334/jors.ay).

<a name=bib-ostermann2017></a>[Ostermann, FO and C Granell](#cite-ostermann2017) (2017). "Advancing science with VGI: Reproducibility and replicability of recent studies using VGI". In: _Transactions in GIS_ 21.2, pp. 224-237. URL: [https://doi.org/10.1111/tgis.12195](https://doi.org/10.1111/tgis.12195).

<a name=bib-nust2018></a>[Nüst, Daniel, C Granell, et al.](#cite-nust2018) (2018). "Reproducible research and GIScience: an evaluation using AGILE conference papers". In: _PeerJ_ 6, p. e5072. URL: [https://doi.org/10.7717/peerj.5072](https://doi.org/10.7717/peerj.5072).

<a name=bib-stark2018></a>[Stark, PB](#cite-stark2018) (2018). "Before reproducibility must come preproducibility". In: _Nature_ 557.7706, pp. 613-614. URL: [https://doi.org/10.1038/d41586-018-05256-0](https://doi.org/10.1038/d41586-018-05256-0).

<a name=bib-turingway2019></a>[The Turing Way Community](#cite-turingway2019) (2019). _The Turing Way: A Handbook for Reproducible Data Science_. URL: [https://the-turing-way.netlify.app/welcome.html](https://the-turing-way.netlify.app/welcome.html).

<a name=bib-nust2021></a>[Nüst, Daniel and Stephen J Eglen](#cite-nust2021) (2021). "CODECHECK: an Open Science initiative for the independent execution of computations underlying research articles during peer review to improve reproducibility". In: _F1000Research_ 10, p. 253. ISSN: 2046-1402. URL: [https://f1000research.com/articles/10-253/v1](https://f1000research.com/articles/10-253/v1).

<a name=bib-ostermann2021></a>[Ostermann, Frank O., Daniel Nüst, et al.](#cite-ostermann2021) (2021). "Reproducible Research and GIScience: An Evaluation Using GIScience Conference Papers". In: _11th International Conference on Geographic Information Science (GIScience 2021) - Part II_. Vol. 208. Dagstuhl, Germany, pp. 2:1-2:16. ISBN: 978-3-95977-208-2.
URL: [https://drops.dagstuhl.de/opus/volltexte/2021/14761](https://drops.dagstuhl.de/opus/volltexte/2021/14761).
]